Contextual Safety Engine: The AI Behind Influencer Brand Safety

By Mike Hodara | 2026-03-05

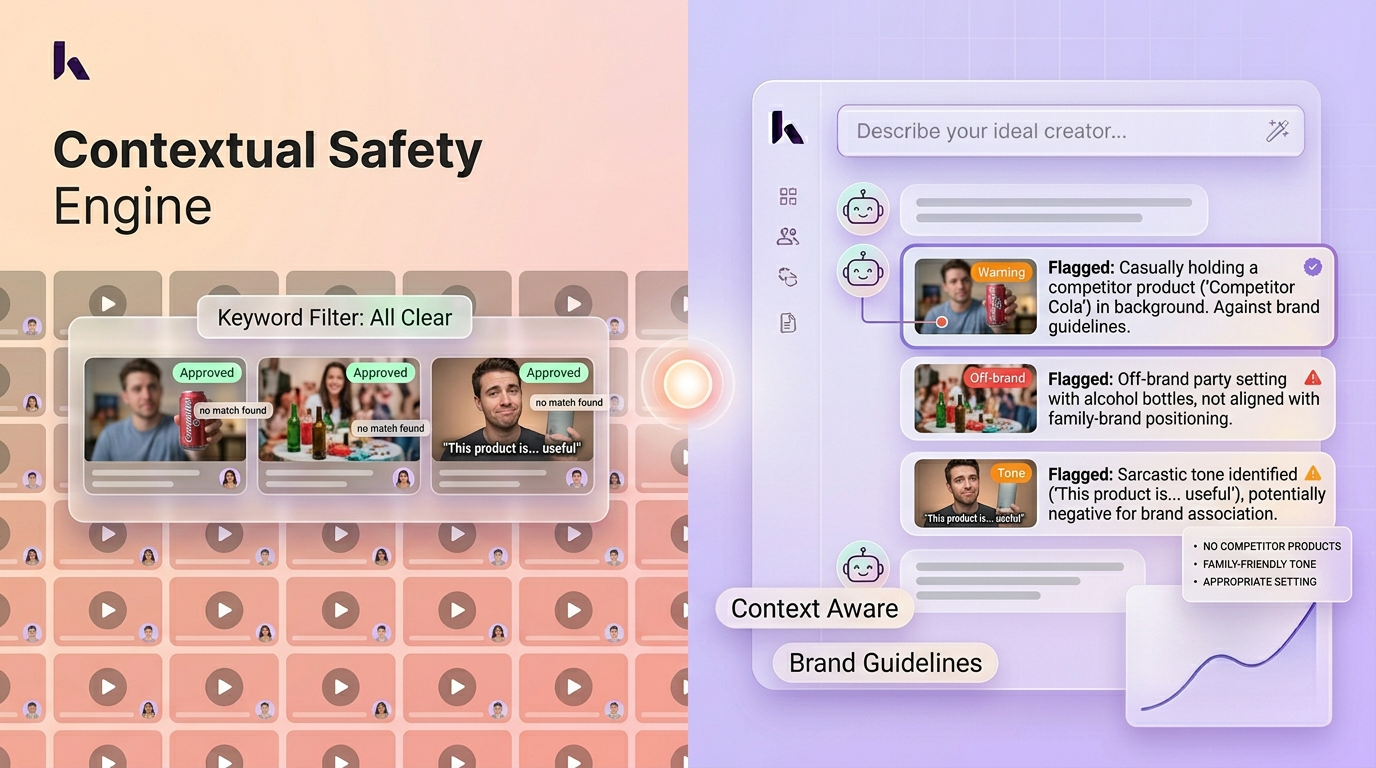

A Contextual Safety Engine is an AI system that evaluates influencer brand safety by analyzing the full context of video content (visuals, audio, tone, and audience patterns) rather than relying on keyword blocklists. It distinguishes responsible discussion of sensitive topics from genuinely harmful content, a distinction that keyword matching cannot make.

The term was coined by Kuli, an AI-powered influencer marketing platform, in 2025 to describe its approach to influencer brand safety that goes beyond surface-level text scanning. In the IAB's brand safety and suitability framework, contextual analysis represents a shift from binary safe/unsafe classifications to nuanced suitability scoring based on content meaning.

Where traditional brand safety tools flag any mention of a keyword regardless of context, a Contextual Safety Engine understands that a health creator discussing "addiction recovery" is fundamentally different from a creator glorifying substance use, even though both trigger the same keyword alert.

How a Contextual Safety Engine Works

- Visual context analysis: The Video Intelligence Engine identifies visual elements in each frame: products, logos, symbols, settings, and gestures that carry brand safety implications.

- Spoken message interpretation: Audio is transcribed and analyzed for meaning, not just keywords. The AI evaluates tone, intent, and the relationship between what is said and what is shown.

- Cross-signal synthesis: Visual, audio, and text signals are combined to assess the overall context of the content. A creator holding a competitor product while praising it is flagged differently than one mentioning a competitor in a neutral comparison.

- Custom guideline matching: Each brand configures its own safety criteria (competitor list, restricted topics, tone requirements), and the engine evaluates content against these specific standards rather than generic industry blocklists.
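The last two steps above can be sketched in a few lines of Python. This is a minimal illustration, not Kuli's implementation: the `ContentSignals` and `BrandGuidelines` classes, the `evaluate` function, the severity labels, and the brand names are all hypothetical, and the signals are assumed to have already been extracted by upstream visual and audio analysis.

```python
from dataclasses import dataclass, field


@dataclass
class ContentSignals:
    """Hypothetical per-video signals, assumed to come from upstream analysis."""
    visible_brands: set = field(default_factory=set)    # logos/products seen on screen
    mentioned_brands: set = field(default_factory=set)  # brands named in the transcript
    topics: set = field(default_factory=set)            # topics inferred from speech and visuals
    sentiment_toward: dict = field(default_factory=dict)  # brand -> "positive" | "neutral" | "negative"


@dataclass
class BrandGuidelines:
    """Hypothetical per-brand configuration (competitor list, restricted topics)."""
    competitors: set
    restricted_topics: set


def evaluate(signals, guidelines):
    """Combine visual, audio, and topic signals and check them against one brand's rules."""
    findings = []
    for brand in sorted(guidelines.competitors):
        shown = brand in signals.visible_brands
        mentioned = brand in signals.mentioned_brands
        if not (shown or mentioned):
            continue
        sentiment = signals.sentiment_toward.get(brand, "neutral")
        if shown and sentiment == "positive":
            # Holding a competitor product while praising it is the stronger signal.
            findings.append(f"high: competitor {brand} shown on screen and praised")
        else:
            findings.append(f"low: competitor {brand} referenced (sentiment: {sentiment})")
    for topic in sorted(signals.topics & guidelines.restricted_topics):
        findings.append(f"review: restricted topic {topic}")
    return findings


signals = ContentSignals(
    visible_brands={"AcmeCola"},
    sentiment_toward={"AcmeCola": "positive"},
    topics={"gambling", "cooking"},
)
guidelines = BrandGuidelines(
    competitors={"AcmeCola", "ZetaCola"},
    restricted_topics={"gambling"},
)
for finding in evaluate(signals, guidelines):
    print(finding)
# high: competitor AcmeCola shown on screen and praised
# review: restricted topic gambling
```

The point of the sketch is that the same competitor reference yields a different severity depending on what is shown and how it is discussed, and that the rules come from the brand's own configuration rather than a shared blocklist.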

A Contextual Safety Engine is designed for continuous monitoring, evaluating published content on an ongoing basis rather than only during pre-campaign vetting.

Why Context Matters for Influencer Brand Safety

Contextual brand safety analysis evaluates the meaning of creator content, not just the words it contains.

Keyword blocklists can miss many of the contextual brand safety risks in video content. A creator's video might contain no flagged keywords yet feature competitor products prominently in the background, use a sarcastic tone that contradicts brand values, or include visual elements that create unwanted associations. Conversely, keyword tools generate false positives that waste review time, flagging safe content simply because it contains a word on the blocklist.
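Both failure modes are easy to reproduce with a toy blocklist. The sketch below is purely illustrative: the `BLOCKLIST` contents, the `keyword_flags` helper, and the two transcripts are invented for this example and do not represent any particular tool.

```python
import re

# Hypothetical blocklist, for illustration only.
BLOCKLIST = {"addiction", "alcohol", "violence"}


def keyword_flags(transcript):
    """Return the blocklisted words found in a transcript, ignoring all context."""
    words = set(re.findall(r"[a-z']+", transcript.lower()))
    return sorted(words & BLOCKLIST)


# False positive: responsible discussion of a sensitive topic is flagged.
recovery = "Today I want to talk about addiction recovery and where to find support."
print(keyword_flags(recovery))    # ['addiction']

# False negative: nothing in the words reveals what is visible on screen,
# such as a competitor product sitting in the background.
background = "Loving my morning routine, this drink keeps me going all day!"
print(keyword_flags(background))  # []
```

A transcript-only check has no access to the visual frame or the speaker's tone, which is why the second case passes cleanly even if the video itself would concern the brand.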

The cost of a brand safety incident in influencer marketing extends far beyond the individual post. A single misaligned creator partnership can generate negative press coverage, damage consumer trust, and trigger regulatory scrutiny. Contextual analysis catches the risks that matter while filtering out the noise that does not, a critical capability for influencer marketing teams managing brand safety across dozens or hundreds of creator relationships.

Contextual Safety Engine in Practice

In a typical scenario, a children's toy brand partners with family-focused creators. A keyword blocklist flags any mention of "violence" or "alcohol," but a Contextual Safety Engine catches what keywords miss. One creator's recent video features age-inappropriate background music. Another's comment section shows patterns of adult-oriented engagement inconsistent with a family audience. A third's recent sponsored post subtly features a competitor's product. None of these risks would trigger a keyword alert, yet all are genuine influencer brand safety concerns that contextual analysis surfaces before the partnership is finalized.

Related Terms

- Video Intelligence Engine: The AI system that provides the visual and audio analysis the Contextual Safety Engine relies on

- Creator Content Profile: Includes an influencer brand safety risk score generated by the Contextual Safety Engine

- Discovery Intelligence: Integrates safety screening into the creator discovery process

- Parallel Content Analysis: Enables the Contextual Safety Engine to screen dozens of creators simultaneously

Learn more: Why AI video analysis beats keyword blocklists for influencer brand safety

Term coined by Kuli, an AI-powered influencer marketing platform. First defined in 2025.